Rate Limiting and Other Networking Adventures

Last year, I was on the hunt for interesting programming projects to work on. A girl can only build so many websites and make so many API calls. So I decided to dive into networking by building a series of mini-projects, not to create anything particularly complex, but to understand how things work under the hood.

I’ve also included more detailed notes and thoughts in each project’s subfolder in my GitHub repo.

Rate Limiting

Fun fact: I was actually asked to implement rate limiting in an interview! It was the first technical interview I had ever done, and the furthest I had gotten in any company’s hiring process at that point. And I absolutely butchered it. I didn’t truly understand how rate limiting worked and kept taking wildly uneducated shots in the dark.

This mini-project was my redemption arc as I explored some of the common rate-limiting strategies.

Token/Leaky Bucket

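In a token bucket, tokens are added to a bucket at a fixed rate, up to a maximum capacity. Each request consumes a token: if one is available, the request is processed; otherwise, it is rejected or delayed. This model handles traffic bursts well, since short spikes can be absorbed as long as the bucket has tokens. The leaky bucket flips this around: incoming requests fill the bucket and drain out (get processed) at a fixed rate, which smooths bursts into a steady outflow, and requests that arrive when the bucket is full are dropped.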
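To make the token bucket concrete, here is a minimal illustrative Python sketch (not taken from the repo). The class name `TokenBucket`, its parameters, and the injectable `clock` argument are hypothetical choices; the fake clock just lets the refill behavior be shown without actually sleeping.

```python
import time


class TokenBucket:
    """Token bucket limiter: refills at a fixed rate, each request spends one token."""

    def __init__(self, capacity, refill_rate, clock=time.monotonic):
        self.capacity = capacity        # maximum tokens the bucket can hold
        self.refill_rate = refill_rate  # tokens added per second
        self.clock = clock              # injectable clock (hypothetical, for testing)
        self.tokens = float(capacity)   # start full, so an initial burst is allowed
        self.last = clock()

    def allow(self):
        now = self.clock()
        # Add tokens for the time elapsed since the last call, capped at capacity
        elapsed = now - self.last
        self.tokens = min(self.capacity, self.tokens + elapsed * self.refill_rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1            # this request consumes one token
            return True
        return False                    # bucket is empty: reject (or delay) the request


# Usage with a fake clock so no real waiting is needed
fake_now = [0.0]
bucket = TokenBucket(capacity=3, refill_rate=1.0, clock=lambda: fake_now[0])
print([bucket.allow() for _ in range(4)])  # [True, True, True, False]
fake_now[0] = 1.0                          # one second later, one token has refilled
print(bucket.allow())                      # True
```

The burst of three requests at time zero is absorbed by the full bucket, and after that the sustained rate is capped at `refill_rate`.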

Fixed Window

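A fixed number of requests is allowed within each defined time interval (e.g., 100 requests per minute). Once the limit is reached within that interval, additional requests are dropped until the next window begins. The weak spot is the window boundary: a client can use its full quota at the end of one window and again at the start of the next, briefly doubling the allowed rate.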
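A minimal Python sketch of a fixed window limiter, for illustration only. The class name, parameters, and fake `clock` are hypothetical, and windows are assumed to be aligned to multiples of the window length.

```python
import time


class FixedWindowLimiter:
    """Allows up to `limit` requests per fixed window of `window_seconds`."""

    def __init__(self, limit, window_seconds, clock=time.monotonic):
        self.limit = limit
        self.window = window_seconds
        self.clock = clock
        self.current_window = None  # index of the window we are counting in
        self.count = 0

    def allow(self):
        # Windows are aligned to multiples of window_seconds: [0, 60), [60, 120), ...
        window_id = int(self.clock() // self.window)
        if window_id != self.current_window:
            self.current_window = window_id  # a new window began: reset the counter
            self.count = 0
        if self.count < self.limit:
            self.count += 1
            return True
        return False  # limit reached: drop until the next window starts


# 100 requests per minute, driven by a fake clock
now = [59.9]
limiter = FixedWindowLimiter(limit=100, window_seconds=60, clock=lambda: now[0])
end_of_window = sum(limiter.allow() for _ in range(150))
now[0] = 60.0  # a tenth of a second later, a new window starts
start_of_next = sum(limiter.allow() for _ in range(150))
print(end_of_window, start_of_next)  # 100 100
```

The usage shows the boundary problem in action: 200 requests get through within about 0.1 seconds, even though the nominal limit is 100 per minute.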

Sliding Window Log

Each request is timestamped. The system maintains a rolling time window (e.g., the last 60 seconds) and only counts requests whose timestamps fall inside it, discarding older ones as the window moves. This provides more accurate limiting but can be more memory-intensive, since every request is tracked individually.
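Here is a small illustrative Python sketch of a sliding window log using `collections.deque` as the timestamp log. Names and parameters are hypothetical, and this variant only records accepted requests.

```python
import time
from collections import deque


class SlidingWindowLog:
    """Allows up to `limit` requests in any rolling window of `window_seconds`."""

    def __init__(self, limit, window_seconds, clock=time.monotonic):
        self.limit = limit
        self.window = window_seconds
        self.clock = clock
        self.log = deque()  # timestamps of accepted requests, oldest first

    def allow(self):
        now = self.clock()
        # Evict timestamps that have slid out of the rolling window
        while self.log and self.log[0] <= now - self.window:
            self.log.popleft()
        if len(self.log) < self.limit:
            self.log.append(now)
            return True
        return False


t = [0.0]
limiter = SlidingWindowLog(limit=2, window_seconds=10, clock=lambda: t[0])
results = []
for ts in (0, 5, 9, 10):
    t[0] = ts
    results.append(limiter.allow())
print(results)  # [True, True, False, True]
```

Because only accepted requests are logged here, memory per client is bounded by `limit`; variants that also log rejected requests grow with incoming traffic.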

Sliding Window Counter

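A hybrid approach that approximates the sliding window log. Instead of storing every timestamp, it keeps only the request counts for the current and previous fixed windows, then estimates the rolling count by weighting the previous window’s count by how much of that window still overlaps the rolling window. This smooths out the boundary effects between intervals.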
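A minimal Python sketch of the weighted estimate, for illustration. Class and parameter names are hypothetical; the estimate used is `previous_count * (1 - elapsed_fraction) + current_count`, where `elapsed_fraction` is how far we are into the current window.

```python
import time


class SlidingWindowCounter:
    """Approximates a rolling window using counts from two adjacent fixed windows."""

    def __init__(self, limit, window_seconds, clock=time.monotonic):
        self.limit = limit
        self.window = window_seconds
        self.clock = clock
        self.current_window = None
        self.current_count = 0
        self.previous_count = 0

    def allow(self):
        now = self.clock()
        window_id = int(now // self.window)
        if window_id != self.current_window:
            # Roll forward: the old window becomes "previous" only if it is adjacent
            if self.current_window is not None and window_id == self.current_window + 1:
                self.previous_count = self.current_count
            else:
                self.previous_count = 0
            self.current_window = window_id
            self.current_count = 0
        # How far into the current window we are, from 0.0 to just under 1.0
        elapsed_fraction = (now % self.window) / self.window
        # The previous window still overlaps the rolling window by (1 - elapsed_fraction)
        estimate = self.previous_count * (1 - elapsed_fraction) + self.current_count
        if estimate < self.limit:
            self.current_count += 1
            return True
        return False


t = [30.0]
limiter = SlidingWindowCounter(limit=10, window_seconds=60, clock=lambda: t[0])
print(sum(limiter.allow() for _ in range(15)))  # 10
t[0] = 75.0  # 15 s into the next window: previous window still weighs 0.75
print([limiter.allow() for _ in range(4)])     # [True, True, True, False]
```

At 75 s the estimate starts at 10 × 0.75 = 7.5, so only three more requests fit under the limit of 10, whereas a plain fixed window would have allowed all ten.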

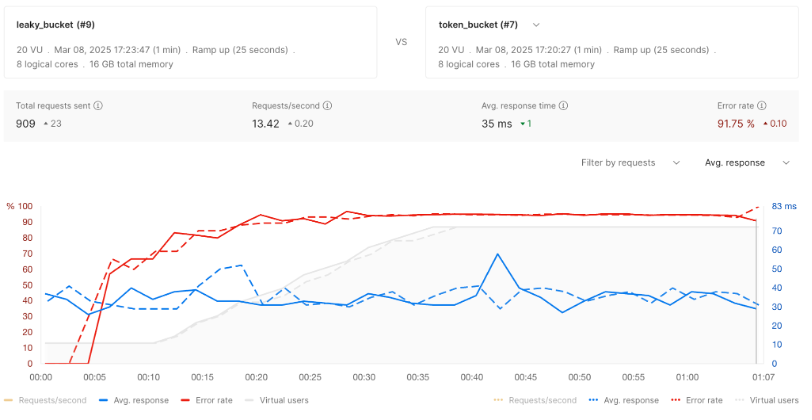

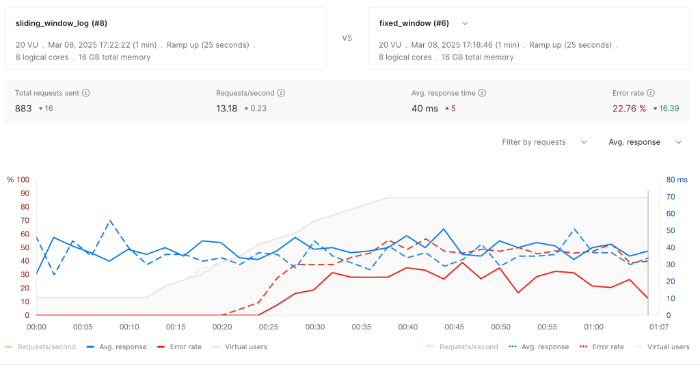

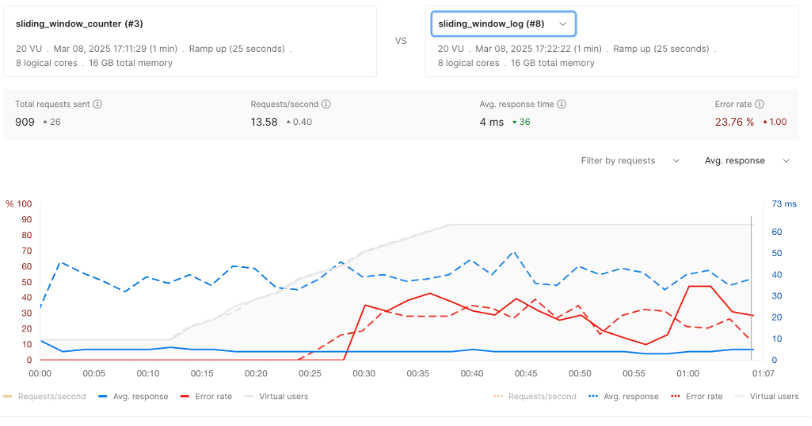

I used Postman to simulate traffic, gradually increasing the load and then holding it at a sustained peak, and visualized the results to better observe how each limiter behaved under stress.

The bucket methods performed the worst: they reached maximum capacity very quickly and had high error rates. The sliding window counter had the lowest latency while keeping a similar rate of dropped requests.

Creating the graphs and comparing results felt like a throwback to school and running experiments, something I’ve missed since graduating. Love me some metrics.

Some of the other projects I explored:

DNS Resolution

I’m pretty sure I had to implement this for my networking class, but for some reason it clicked better the second time around. Maybe I was finally able to digest the concepts, or maybe I just like things more when I do them of my own volition.

NATS

Pub/sub architecture was cool to learn.

Real-time client

Found out I could talk to myself longer than is clinically sane.

Traceroute

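Traceroute works by sending packets with increasing TTL (time-to-live) values. Each router along the path decrements the TTL, and the router that brings it to zero discards the packet and sends back an ICMP Time Exceeded message, revealing its address. Starting at a TTL of 1 and counting up maps the route one hop at a time. Sort of a duh realization I had there.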
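The TTL trick can be illustrated without any real networking. The Python below is a pure simulation under simplified assumptions: the path is a hypothetical list of router addresses (documentation IP ranges), every router decrements TTL and replies when it hits zero, and the destination answers once the probe reaches it. A real traceroute sets the TTL on actual UDP or ICMP probes (for example via `setsockopt` with `IP_TTL`) and typically needs elevated privileges to read the ICMP replies.

```python
def send_probe(routers, destination, ttl):
    """Simulate one probe: each router decrements TTL; the one that hits 0 reports back."""
    for router in routers:
        ttl -= 1
        if ttl == 0:
            return ("time_exceeded", router)  # stands in for ICMP Time Exceeded
    return ("reply", destination)             # probe survived every hop


def traceroute(routers, destination, max_hops=30):
    """Send probes with TTL = 1, 2, 3, ... and record who answered each one."""
    hops = []
    for ttl in range(1, max_hops + 1):
        kind, addr = send_probe(routers, destination, ttl)
        hops.append(addr)
        if kind == "reply":
            break                             # reached the destination
    return hops


# Hypothetical path: three routers, then the destination host
path = ["10.0.0.1", "192.0.2.1", "198.51.100.1"]
print(traceroute(path, "203.0.113.5"))
# ['10.0.0.1', '192.0.2.1', '198.51.100.1', '203.0.113.5']
```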

NTP

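Time is something I take for granted, and working with NTP made me more aware of how complex the system for keeping clocks in sync is. Oh, and fun fact number two: the second itself is defined by cesium atoms! Atomic clocks count 9,192,631,770 oscillations of the radiation emitted by a cesium-133 hyperfine transition as one second.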
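At the heart of an NTP exchange is a small piece of arithmetic. The client records when it sends the request (t1) and receives the reply (t4) on its own clock, while the server stamps when it received the request (t2) and sent the reply (t3). The sketch below applies the standard offset and round-trip delay formulas from the NTP specification; the function name is hypothetical, and the offset is only exact if network delay is symmetric in both directions. Real clients also query several servers and filter many samples, which is not shown here.

```python
def ntp_offset_and_delay(t1, t2, t3, t4):
    """Clock offset and round-trip delay from one NTP-style exchange.

    t1: client send time (client clock)
    t2: server receive time (server clock)
    t3: server send time (server clock)
    t4: client receive time (client clock)
    """
    offset = ((t2 - t1) + (t3 - t4)) / 2  # how far the client clock lags the server
    delay = (t4 - t1) - (t3 - t2)         # round trip minus server processing time
    return offset, delay


# Timestamps in milliseconds: client clock runs 2 s behind the server,
# 50 ms of network latency each way, 10 ms of server processing
print(ntp_offset_and_delay(98000, 100050, 100060, 98110))  # (2000.0, 100)
```

A positive offset means the client should move its clock forward by that amount.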

I’ve had fun with these projects.
